Support for sparse linear algebra (and other operations) is an important part of any numerical package. The ALGLIB package includes a highly optimized implementation of a sparse matrix class which supports a rich set of operations and can be used in several programming languages, including C++ and C#.

Our implementation of sparse matrices has following distinctive features (see below for more detailed discussion):

1 Getting started and examples

Getting started

Examples

2 Sparse storage formats

HTS (hash table storage)

CRS representation

SKS representation

3 Basic operations with sparse matrices

Creation, copying and conversion between storage formats

Read/write access to matrix elements

4 Sparse BLAS

General (unsymmetric) sparse matrices

Symmetric sparse matrices

Triangular sparse matrices

5 Other functions

Factorizations

Linear systems

6 Downloads section

The most important pieces of sparse matrix functionality are provided by the sparse subpackage of the ALGLIB package. This subpackage implements the sparse matrix class, basic operations like matrix initialization/modification, and a rich set of linear algebra operations (sparse BLAS).

Everything starts with creation of the sparse matrix instance. You have to decide which sparse matrix storage format to use for initialization: CRS, SKS or HTS (hash table based storage). Every format has its benefits and drawbacks:

Successfully initialized matrix can be used in one of the following ways:

The following examples (in C++, C# and other languages supported by ALGLIB) will show you how to work with sparse matrices in ALGLIB:

The sections below discuss these examples in more detail.

Several popular formats are used to store sparse matrices, each with its own benefits and drawbacks. The ALGLIB package implements three of them: HTS (hash table storage, also known as Dictionary of Keys), CRS (compressed row storage) and SKS (skyline storage).

Sparse matrix can be stored as hash table which maps keys (row/column indexes) to values of corresponding elements. Such storage format has following features:

You may see that HTS is good for fast prototyping (flexible initialization, no need to know matrix size in advance), but before you use the matrix in actual computations, such as sparse BLAS operations, you have to convert it to one of the more efficient formats.
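The idea of hash table storage can be illustrated with a minimal sketch in plain Python. This is not ALGLIB code: the class name `DokMatrix` and its methods are hypothetical and only mimic the behaviour described above, with a dictionary that maps (row, column) pairs to values.

```python
class DokMatrix:
    """Hypothetical dictionary-of-keys sparse matrix: (row, col) -> value."""

    def __init__(self, nrows, ncols):
        self.nrows = nrows
        self.ncols = ncols
        self.data = {}  # only nonzero elements are stored

    def _check(self, i, j):
        if not (0 <= i < self.nrows and 0 <= j < self.ncols):
            raise IndexError("index out of range")

    def get(self, i, j):
        """Return element (i, j); elements that are not stored read as zero."""
        self._check(i, j)
        return self.data.get((i, j), 0.0)

    def set(self, i, j, value):
        """Overwrite element (i, j); storing zero removes the entry."""
        self._check(i, j)
        if value == 0.0:
            self.data.pop((i, j), None)
        else:
            self.data[(i, j)] = value

    def add(self, i, j, value):
        """Add value to element (i, j)."""
        self.set(i, j, self.get(i, j) + value)


a = DokMatrix(3, 3)
a.set(2, 0, 5.0)  # elements may arrive in any order
a.set(0, 1, 2.0)
a.add(0, 1, 1.5)  # accumulates into an existing element
```

Because every access goes through a hash lookup, initialization is flexible, but row-by-row traversal (needed for fast matrix-vector products) is not directly available in this layout.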

CRS (Compressed Row Storage) is another representation which can be used to store sparse matrix. Matrix elements are ordered by rows (from top to bottom) and columns (within row - from left to right). Such storage format has following features:

The only downside of CRS representation is that it does not allow flexible HTS-like initialization. You have to tell in advance how many elements are located in each row. On the other hand, this format has better memory efficiency and supports sparse BLAS operations.
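As an illustration of the row-wise layout, the sketch below converts a dictionary of (row, column) keys into three CRS arrays. It is plain Python, not ALGLIB code; the function name `dok_to_crs` and the array names `values`, `col_idx` and `row_ptr` are assumptions made for this example, and ALGLIB's internal layout may differ in details.

```python
def dok_to_crs(nrows, entries):
    """Convert {(row, col): value} into CRS arrays (values, col_idx, row_ptr)."""
    # Count elements per row first: CRS needs these counts before any
    # element can be placed, which is why it cannot grow freely like HTS.
    row_ptr = [0] * (nrows + 1)
    for (i, _j) in entries:
        row_ptr[i + 1] += 1
    # Prefix sums turn counts into row start offsets.
    for i in range(nrows):
        row_ptr[i + 1] += row_ptr[i]
    # Rows go from top to bottom, columns from left to right within a row.
    values, col_idx = [], []
    for (i, j) in sorted(entries):
        values.append(entries[(i, j)])
        col_idx.append(j)
    return values, col_idx, row_ptr


# Matrix [[0, 2, 1], [0, 0, 0], [5, 0, 4]] given in arbitrary order.
entries = {(2, 0): 5.0, (0, 1): 2.0, (0, 2): 1.0, (2, 2): 4.0}
values, col_idx, row_ptr = dok_to_crs(3, entries)
# values  == [2.0, 1.0, 5.0, 4.0]
# col_idx == [1, 2, 0, 2]
# row_ptr == [0, 2, 2, 4]
```

Row `i` occupies positions `row_ptr[i]` to `row_ptr[i + 1] - 1` of `values` and `col_idx`, so an empty row (row 1 here) costs only one repeated offset.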

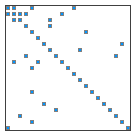

SKS (skyline storage, variable bandwidth storage) is a representation optimized for low-profile matrices. All elements which fall within skyline are stored in the matrix:

This storage format has following features:

Skyline storage format has the following interesting property: its shape is preserved by Cholesky decomposition, i.e. the Cholesky factor fits into the same skyline and no fill-in occurs outside it.
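This property can be checked numerically on a small example. The sketch below uses a textbook dense Cholesky factorization in plain Python (not ALGLIB code; the helper names are hypothetical) and compares the skyline of the lower triangle of a matrix with the skyline of its Cholesky factor. The test matrix is an assumption chosen for illustration: it is symmetric, strictly diagonally dominant, and has one zero inside its skyline.

```python
import math


def cholesky_lower(a):
    """Dense Cholesky factorization: returns lower triangular l with l*l^T = a."""
    n = len(a)
    l = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(l[i][k] * l[j][k] for k in range(j))
            if i == j:
                l[i][j] = math.sqrt(a[i][i] - s)
            else:
                l[i][j] = (a[i][j] - s) / l[j][j]
    return l


def skyline_profile(m):
    """For each row of the lower triangle, index of its first nonzero column."""
    return [next(j for j in range(i + 1) if m[i][j] != 0.0)
            for i in range(len(m))]


a = [[4.0, 1.0, 0.0, 0.0],
     [1.0, 5.0, 2.0, 1.0],
     [0.0, 2.0, 6.0, 0.0],
     [0.0, 1.0, 0.0, 3.0]]

l = cholesky_lower(a)
profile_a = skyline_profile(a)  # [0, 0, 1, 1]
profile_l = skyline_profile(l)  # same profile as for a
```

Element (3, 2) is zero in `a` but nonzero in `l`. It lies inside the skyline, so it is already stored, and no new storage is needed for the factor.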

Sparse matrix object can be created with one of the following functions: sparsecreate, sparsecreatecrs, sparsecreatesks or sparsecreatesksband.

If you already have an allocated sparse matrix object and want to reuse previously allocated memory as much as possible, you can use buffered versions of the functions above: sparsecreatebuf, sparsecreatecrsbuf, sparsecreatesksbuf or sparsecreatesksbandbuf. If you want to immediately free memory associated with a sparse matrix object (but for some reason cannot destroy the instance of the class), you can use the sparsefree function.

A copy of sparse matrix instance can be created with sparsecopy (or sparsecopybuf) function. An instance of sparse matrix class can also be copied and converted to some other storage format (it can also be converted in-place, although such "in-place" conversion often involves allocation of additional memory):

Other operations with sparse matrices include:

Sparse matrix modification can be performed with one of the following functions:

You can read contents of the sparse matrix with functions below:

One of the most important sparse matrix operations is calculation of matrix-vector product. Following functions are supported:
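Independently of the particular ALGLIB functions, the operation itself is easy to state for row-wise storage. The sketch below computes y = A·x for a matrix held in three CRS-style arrays. It is plain Python written for illustration; the function name `crs_matvec` and the array names are assumptions, not ALGLIB identifiers.

```python
def crs_matvec(values, col_idx, row_ptr, x):
    """Return y = A*x for a matrix stored as CRS arrays."""
    y = []
    for i in range(len(row_ptr) - 1):
        acc = 0.0
        # Only the stored elements of row i are visited.
        for k in range(row_ptr[i], row_ptr[i + 1]):
            acc += values[k] * x[col_idx[k]]
        y.append(acc)
    return y


# A = [[0, 2, 1],
#      [0, 0, 0],
#      [5, 0, 4]]
values = [2.0, 1.0, 5.0, 4.0]
col_idx = [1, 2, 0, 2]
row_ptr = [0, 2, 2, 4]

y = crs_matvec(values, col_idx, row_ptr, [1.0, 2.0, 3.0])
# y == [7.0, 0.0, 17.0]
```

The cost is proportional to the number of stored elements rather than to the full matrix size, which is the main reason to convert a matrix to a row-wise format before repeated products.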

Sometimes you want to calculate matrix-matrix product A·X, where A is sparse and X is dense:

Another important case is a sparse symmetric matrix: although such matrices can be handled just as general ones, you can save both memory and time by storing just one half of the matrix (either upper or lower triangle), and explicitly accounting for the structure of the matrix. Following functions work with sparse symmetric matrices:
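The saving can be illustrated with a short sketch that stores only the upper triangle of a symmetric matrix in CRS-style arrays and still computes the full product y = A·x. This is plain Python for illustration only; the function name `crs_sym_matvec_upper` and the array names are assumptions, not ALGLIB identifiers.

```python
def crs_sym_matvec_upper(values, col_idx, row_ptr, x):
    """Return y = A*x where only the upper triangle (j >= i) of symmetric A is stored."""
    n = len(row_ptr) - 1
    y = [0.0] * n
    for i in range(n):
        for k in range(row_ptr[i], row_ptr[i + 1]):
            j, v = col_idx[k], values[k]
            y[i] += v * x[j]      # stored element A[i][j]
            if j != i:
                y[j] += v * x[i]  # mirrored element A[j][i], never stored
    return y


# A = [[4, 1, 0],
#      [1, 3, 2],
#      [0, 2, 5]], upper triangle only:
values = [4.0, 1.0, 3.0, 2.0, 5.0]
col_idx = [0, 1, 1, 2, 2]
row_ptr = [0, 2, 4, 5]

y = crs_sym_matvec_upper(values, col_idx, row_ptr, [1.0, 2.0, 3.0])
# y == [6.0, 13.0, 19.0]
```

Each off-diagonal element is read once and used twice, so both the storage and the memory traffic are roughly halved compared with storing the full matrix.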

Finally, triangular sparse matrices are one more important case:
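A typical operation for this case is the solution of a triangular system. The sketch below performs forward substitution for a lower triangular matrix held in CRS-style arrays. It is plain Python for illustration; the function name `crs_lower_solve` is hypothetical, and the code assumes that each row stores only elements with column index not exceeding the row index, including a nonzero diagonal.

```python
def crs_lower_solve(values, col_idx, row_ptr, b):
    """Solve L*x = b by forward substitution, L lower triangular in CRS form."""
    n = len(row_ptr) - 1
    x = [0.0] * n
    for i in range(n):
        acc = b[i]
        diag = None
        for k in range(row_ptr[i], row_ptr[i + 1]):
            j = col_idx[k]
            if j == i:
                diag = values[k]
            else:
                acc -= values[k] * x[j]  # x[j] is already known because j < i
        if diag is None or diag == 0.0:
            raise ZeroDivisionError("zero or missing diagonal in row %d" % i)
        x[i] = acc / diag
    return x


# L = [[2, 0, 0],
#      [1, 4, 0],
#      [0, 3, 5]]
values = [2.0, 1.0, 4.0, 3.0, 5.0]
col_idx = [0, 0, 1, 1, 2]
row_ptr = [0, 1, 3, 5]

x = crs_lower_solve(values, col_idx, row_ptr, [2.0, 9.0, 21.0])
# x == [1.0, 2.0, 3.0]
```

Row-wise storage suits forward substitution well, because row i needs exactly the previously computed components of x and nothing else.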

Triangular factorizations of sparse matrices are implemented in the trfac subpackage. You can find more information on the subject at the corresponding page of ALGLIB User Guide.

ALGLIB includes several direct and iterative sparse solvers: a linear conjugate gradient method, an LSQR iterative least squares solver, and a direct SKS-based solver. More information on the subject can be found in the following sections of ALGLIB Reference Manual:

This article is licensed for personal use only.

ALGLIB Project offers you two editions of ALGLIB:

ALGLIB Free Edition:

+delivered for free

+offers full set of numerical functionality

+extensive algorithmic optimizations

-no multithreading

-non-commercial license

ALGLIB Commercial Edition:

+flexible pricing

+offers full set of numerical functionality

+extensive algorithmic optimizations

+high performance (SMP, SIMD)

+commercial license with support plan

Links to download sections for Free and Commercial editions can be found below: